Pre-Puff Process

Overview

The Pre-Puff process is a specialized weighing and tracking system designed to record the weight of pre-puffed expandable polystyrene (EPS) bags as they are packaged for customer orders.

This interface allows operators to select their station and employees, monitor scale readings in real time, and manage the completion and shipment details of Pre-Puff jobs.

Web Application Interface

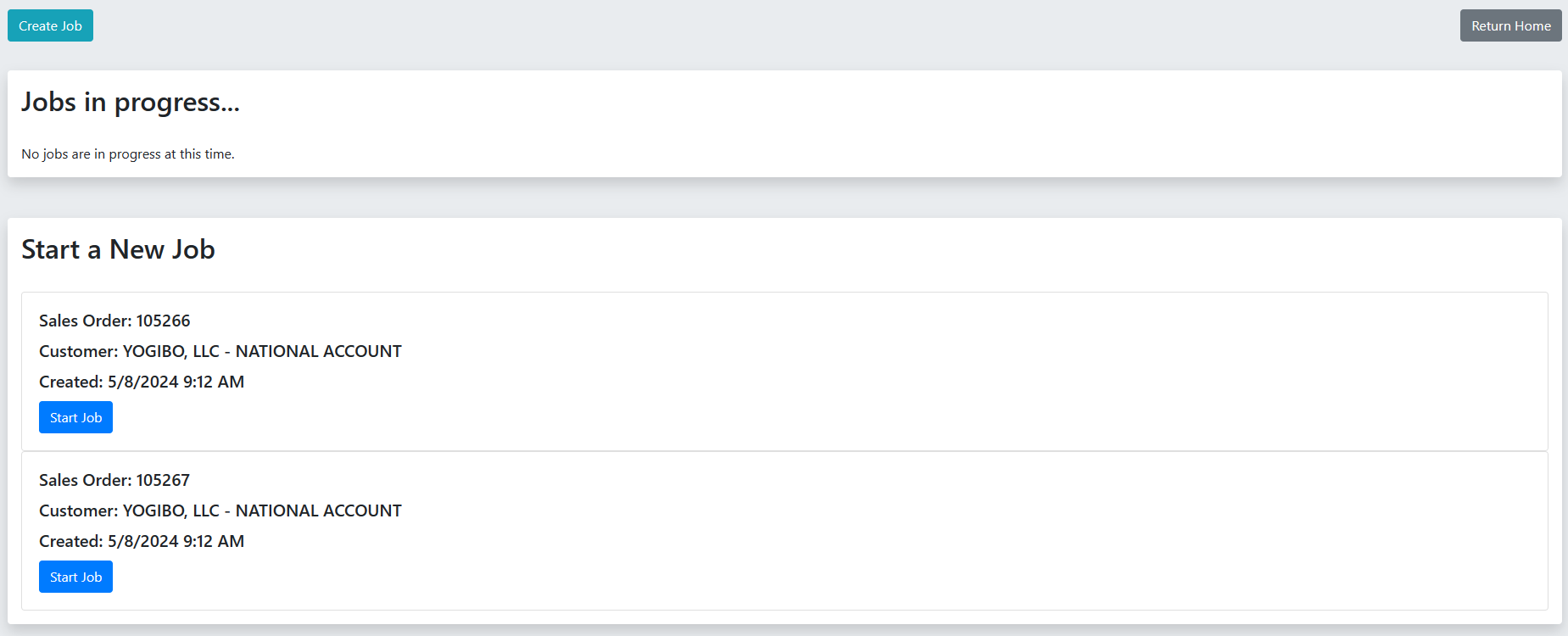

Job Management Page

Page Location: /prepuff/add_prepuff

The job management page displays all available Pre-Puff jobs created by administrators.

- Start a New Job: Lists new jobs with the Sales Order, Customer, and Created Date. Click the Start button to begin.

- Jobs in progress...: Lists jobs that have already been started but not finished. Click the Resume button to continue a paused or transitioned job.

Starting or Resuming a Job

When starting or resuming a job, complete the following steps:

- Select Station: Choose the physical weighing station you are working at.

- Job Employees: Select the employees working on this job.

- Click Start Job to enter the "In Progress" view.
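Together with the Pause, Transition Job Owner, and Finish actions described under Job Actions, the Start and Resume buttons imply a simple job lifecycle. The sketch below is one way to picture it. The state names, the `TRANSITIONS` table, and `apply_action` are hypothetical and chosen for illustration; they are not taken from the application itself.

```python
from enum import Enum


class JobState(Enum):
    NEW = "new"                  # listed under "Start a New Job"
    IN_PROGRESS = "in_progress"  # open on the "Pre-Puff In Progress" page
    PAUSED = "paused"
    TRANSITIONED = "transitioned"
    FINISHED = "finished"


# (action, current state) -> next state. Assumed table, for illustration only.
TRANSITIONS = {
    ("start", JobState.NEW): JobState.IN_PROGRESS,
    ("resume", JobState.PAUSED): JobState.IN_PROGRESS,
    ("resume", JobState.TRANSITIONED): JobState.IN_PROGRESS,
    ("pause", JobState.IN_PROGRESS): JobState.PAUSED,
    ("transition", JobState.IN_PROGRESS): JobState.TRANSITIONED,
    ("finish", JobState.IN_PROGRESS): JobState.FINISHED,
}


def apply_action(state: JobState, action: str) -> JobState:
    """Return the state a job moves to, or raise if the action is not allowed."""
    key = (action, state)
    if key not in TRANSITIONS:
        raise ValueError(f"Cannot {action!r} a job that is {state.value}")
    return TRANSITIONS[key]
```

Under these assumptions, a finished job can be neither started nor resumed, which matches the page listing only new and unfinished jobs.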

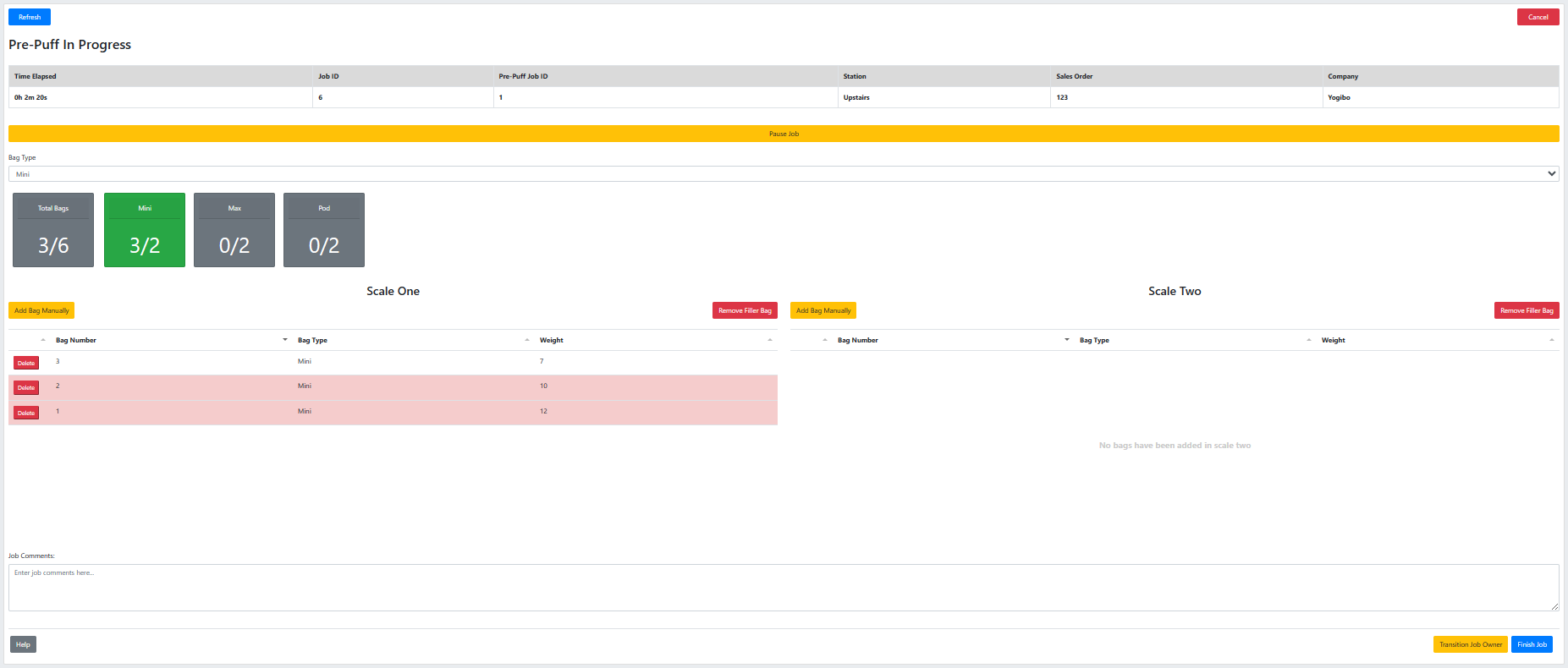

Active Job Monitoring Display

The "Pre-Puff In Progress" page is where the actual weighing process is monitored.

Job Details & Progress:

- Displays the Job ID, Station, Sales Order, and Company.

- Shows the Total Bags target and progress, as well as targets for specific bag types.

Bag Types: Use the Bag Type dropdown to select the type of bag currently being weighed. When the target for a bag type is reached, the system will prompt you to switch to the next type.
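The per-type targets and the switch prompt can be pictured with a small counter. This is a minimal sketch, not the application's code: the `JobProgress` class, its method names, and the bag type names in the usage note are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class JobProgress:
    targets: Dict[str, int]                               # bag type -> target count
    counts: Dict[str, int] = field(default_factory=dict)  # bag type -> bags weighed

    def next_incomplete_type(self) -> Optional[str]:
        """First bag type (in target order) that has not reached its target."""
        for bag_type, target in self.targets.items():
            if self.counts.get(bag_type, 0) < target:
                return bag_type
        return None

    def record_bag(self, bag_type: str) -> Optional[str]:
        """Count one bag. If this type's target is now met, return the type
        to switch to; otherwise return None."""
        if bag_type not in self.targets:
            raise ValueError(f"Unknown bag type: {bag_type}")
        self.counts[bag_type] = self.counts.get(bag_type, 0) + 1
        if self.counts[bag_type] >= self.targets[bag_type]:
            return self.next_incomplete_type()
        return None

    @property
    def total_target(self) -> int:
        return sum(self.targets.values())

    @property
    def total_count(self) -> int:
        return sum(self.counts.values())

    @property
    def is_complete(self) -> bool:
        return self.next_incomplete_type() is None
```

For example, with hypothetical targets `{"A": 2, "B": 1}`, the second call to `record_bag("A")` returns `"B"`, which corresponds to the prompt to switch bag types.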

Scale Feeds: The page displays real-time feeds from Scale One and Scale Two.

- When a bag is placed on one of the network-connected scales and the Print button is pressed, the bag weight appears in that scale's table.

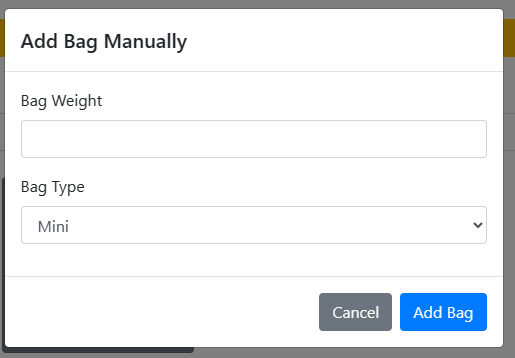

- Add Bag Manually: If a scale malfunctions or loses its network connection, use the "Add Bag Manually" button to enter the bag weight by hand.

- Remove Filler Bag: If a bag was weighed incorrectly, you can mark it as faulty to remove it from the total.
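The effect of manual entries and faulty bags on the running total can be sketched as follows. The `BagRecord` and `BagLog` names, and the choice to keep faulty bags in the log but exclude them from totals, are assumptions for illustration; the guide does not say how the application stores this data.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class BagRecord:
    source: str           # "Scale One", "Scale Two", or "manual" (assumed labels)
    weight: float         # in the scale's configured unit
    faulty: bool = False  # True once the bag has been marked as faulty


class BagLog:
    def __init__(self) -> None:
        self.records: List[BagRecord] = []

    def add(self, source: str, weight: float) -> BagRecord:
        """Record a bag from a scale feed or from manual entry."""
        if weight <= 0:
            raise ValueError("Bag weight must be positive")
        record = BagRecord(source=source, weight=weight)
        self.records.append(record)
        return record

    def mark_faulty(self, index: int) -> None:
        """Flag a bag so that it no longer counts toward the totals."""
        self.records[index].faulty = True

    def bag_count(self) -> int:
        return sum(1 for r in self.records if not r.faulty)

    def total_weight(self) -> float:
        return sum(r.weight for r in self.records if not r.faulty)
```

Keeping the faulty record rather than deleting it preserves a trace of what was weighed, which is one reasonable design for a tracking system.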

Job Actions

- Job Comments: Add or update comments on the job at any time.

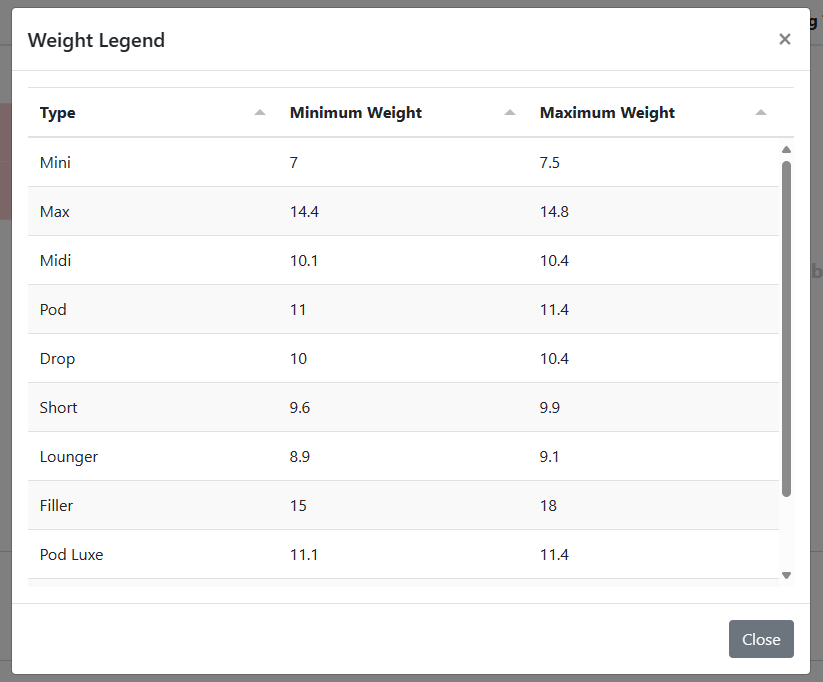

- Help / Weight Legend: Click the Help button to view the minimum and maximum acceptable weights for the currently assigned bag types.

- Pause Job: Pauses the job timer.

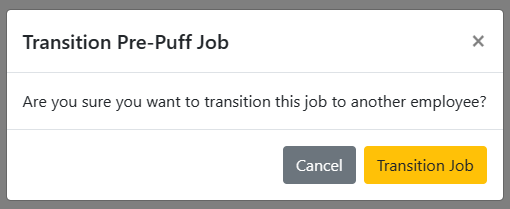

- Transition Job Owner: Saves your progress, logs your transfer time, and frees the station. Use this if you are leaving the station for a prolonged period or changing shifts.

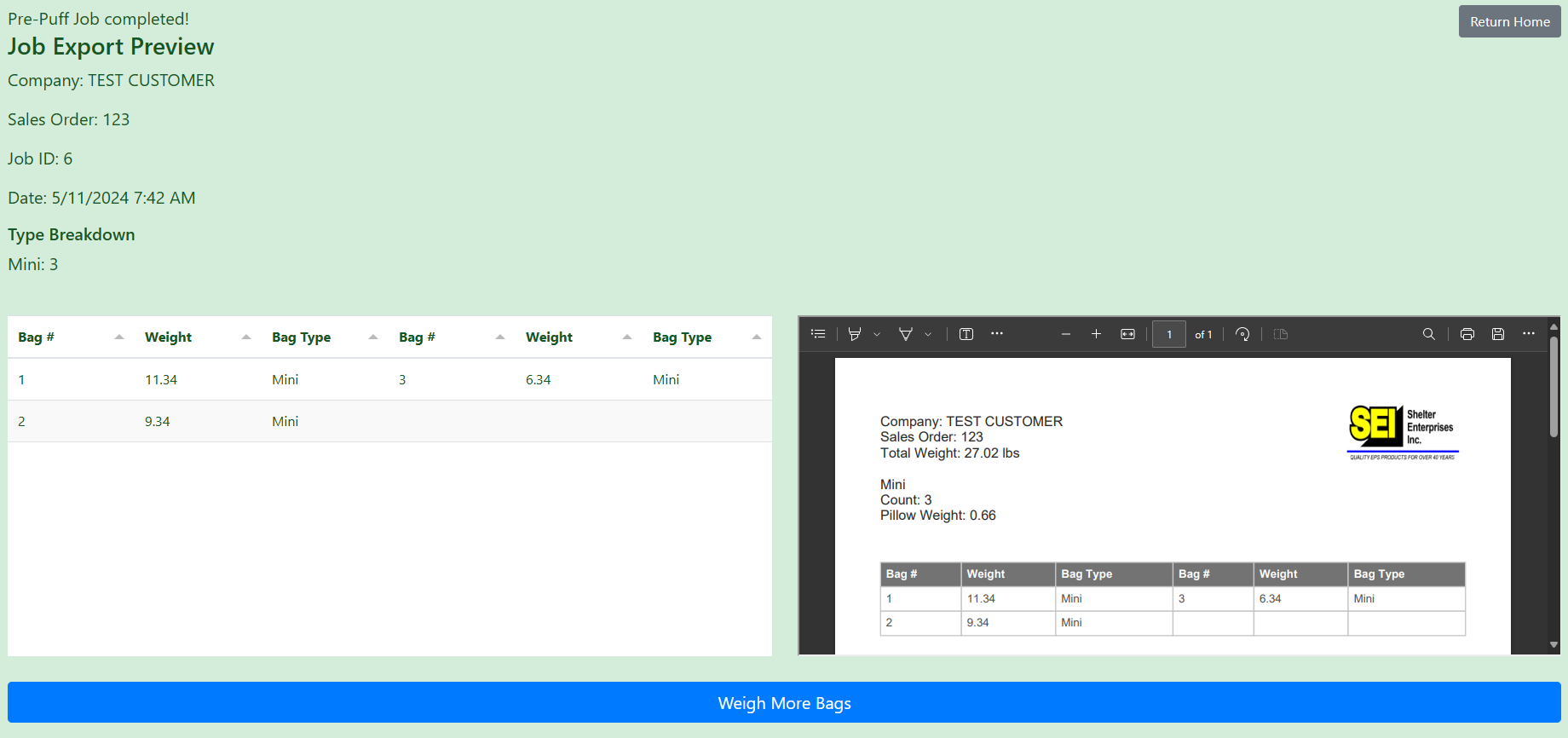

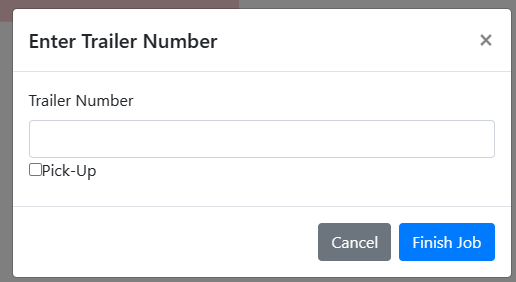

- Finish Job: Completes the job. You will be prompted to enter shipment details, such as selecting a Shelter Enterprises trailer, entering an Outside Carrier trailer number, or marking it as a Pick-Up order.
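The shipment prompt shown when finishing a job can be thought of as a choice among three options, two of which need a trailer value. The sketch below assumes the options are mutually exclusive and that a trailer is required for the first two; the field names and method keys are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional

# Assumed keys for the three shipment options named in this guide.
SHELTER_TRAILER = "shelter_trailer"
OUTSIDE_CARRIER = "outside_carrier"
PICK_UP = "pick_up"
METHODS = {SHELTER_TRAILER, OUTSIDE_CARRIER, PICK_UP}


@dataclass
class ShipmentDetails:
    method: str
    trailer: Optional[str] = None  # selected trailer or entered trailer number


def validate_shipment(details: ShipmentDetails) -> List[str]:
    """Return a list of problems; an empty list means the details are usable."""
    errors: List[str] = []
    if details.method not in METHODS:
        errors.append(f"Unknown shipment method: {details.method}")
    elif details.method != PICK_UP and not (details.trailer or "").strip():
        errors.append("A trailer is required for this shipment method")
    return errors
```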

Scale Operator Workflow

Physical Scale Interface: Because the scales are network-connected devices that send data directly to the server, operators interact primarily with each scale's built-in display and buttons:

- Scale Display: Shows the current weight in the configured unit.

- Print/Send Button: Triggers data transmission to the server.

- Tare/Zero Button: Zeros the scale between weighings.

Operator Process at Weighing Station:

- Place the empty bag on the scale and press "Tare" to zero it.

- Fill the bag with pre-puffed material.

- Verify the weight is on target using the scale's display.

- Press the "Print" or "Send" button on the physical scale.

- The weight will automatically appear in the web interface under Scale One or Scale Two.

- Check the web interface to confirm that the bag count has incremented.

- Repeat the weighing process until the target bag quantity is reached.
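The loop above can be sketched as a small simulation: each reading is checked against the minimum and maximum weights shown by the Help button, and counting stops once the target quantity is reached. The function name and parameters are hypothetical, and the range check here models the operator's own verification; this guide does not state whether the application itself rejects out-of-range weights.

```python
from typing import Iterable, List, Tuple


def run_weighing(
    readings: Iterable[float],
    min_weight: float,
    max_weight: float,
    target_bags: int,
) -> Tuple[List[float], List[float]]:
    """Split readings into sent (on target) and held back (off target),
    stopping as soon as the target number of bags has been sent."""
    sent: List[float] = []
    held_back: List[float] = []
    for weight in readings:
        if len(sent) >= target_bags:
            break  # target quantity reached, stop weighing
        if min_weight <= weight <= max_weight:
            sent.append(weight)       # operator presses Print/Send
        else:
            held_back.append(weight)  # operator adjusts the bag instead
    return sent, held_back
```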